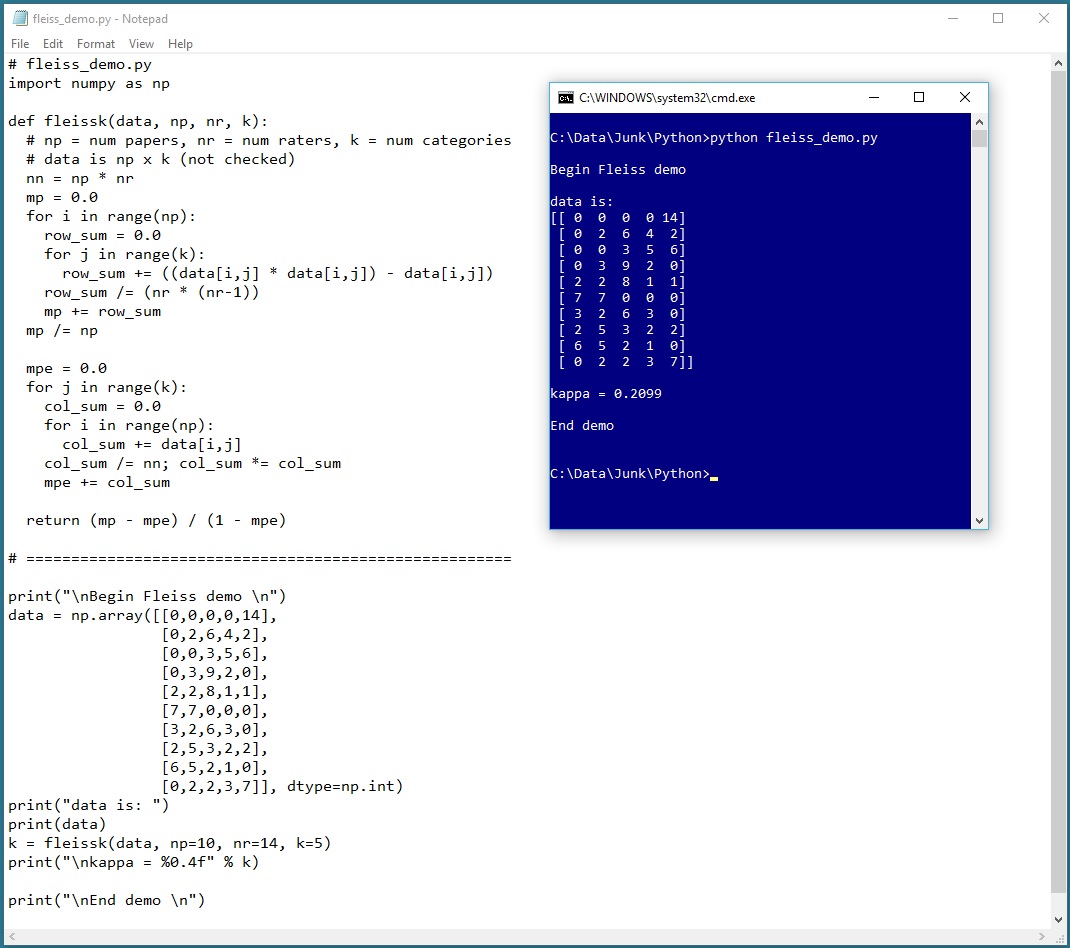

GitHub - Christian-TechUCM/Fleiss-Kappa: Python script that calculates Fleiss Kappa, a statistical measure of inter-rater agreement, on data from an Excel file.

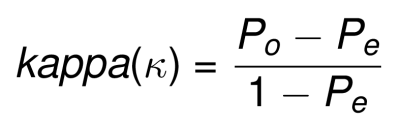

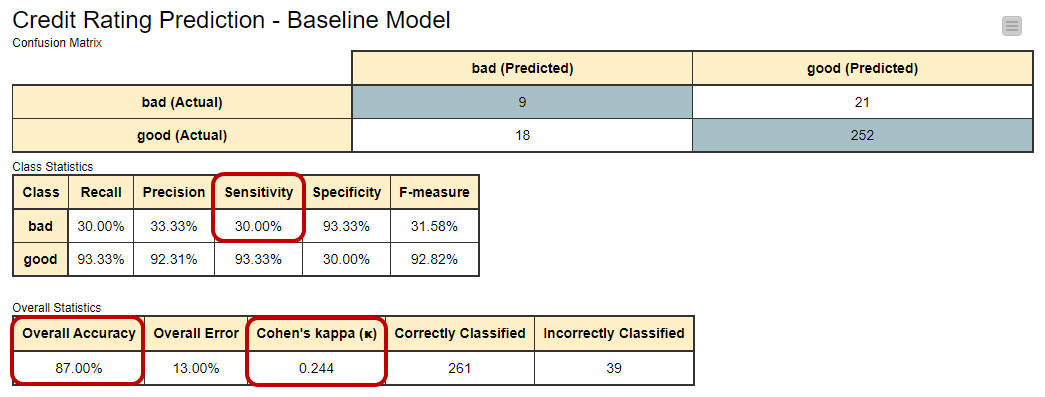

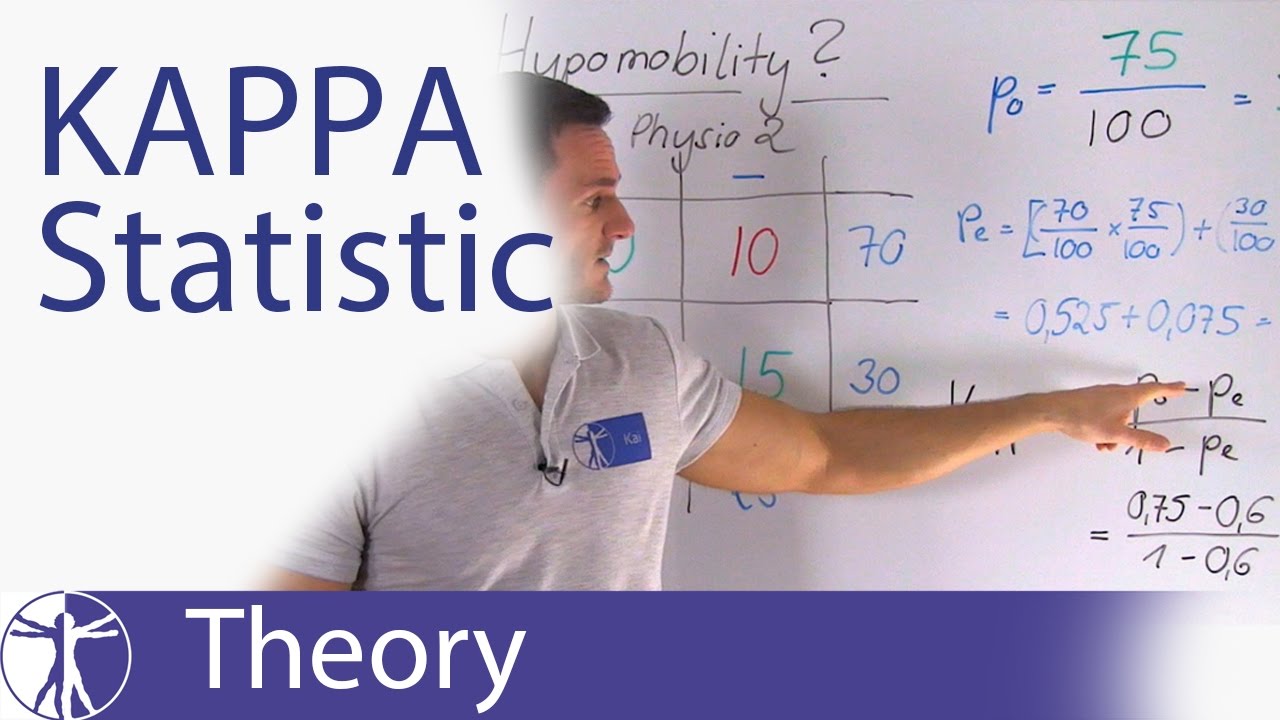

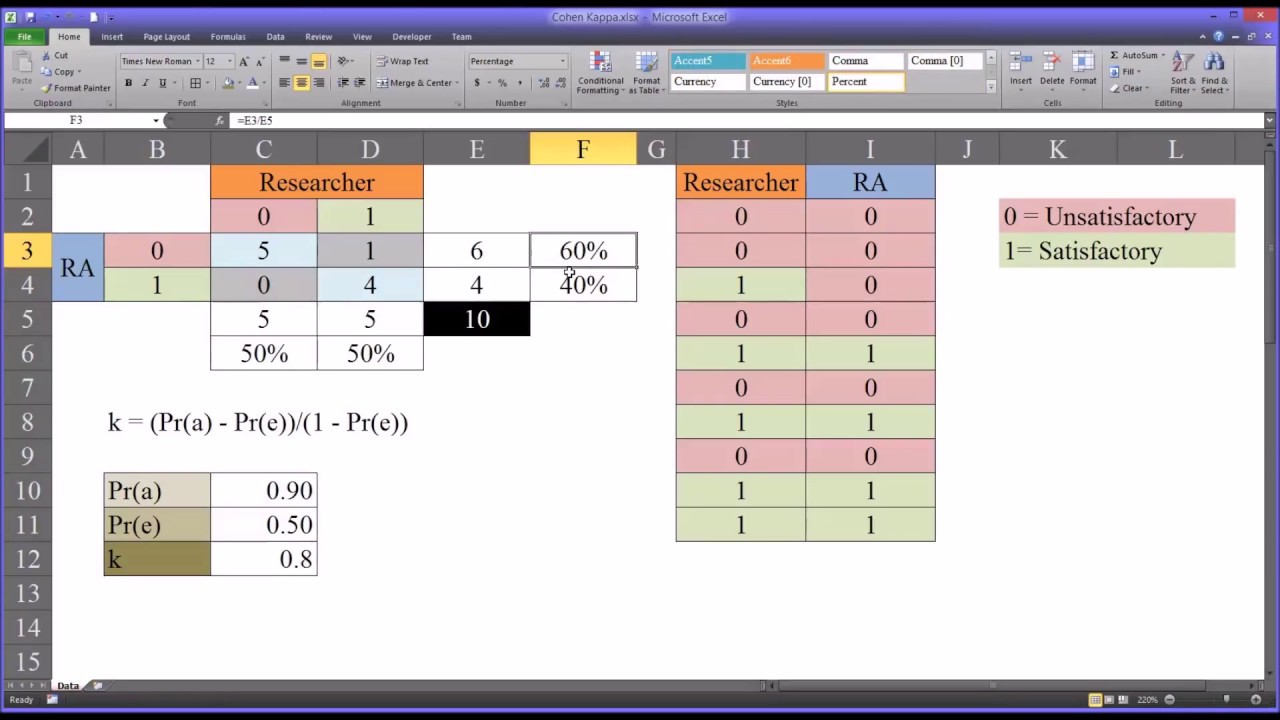

Cohen's Kappa and Fleiss' Kappa— How to Measure the Agreement Between Raters | by Audhi Aprilliant | Medium

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

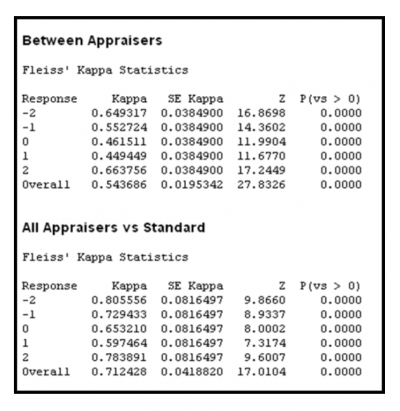

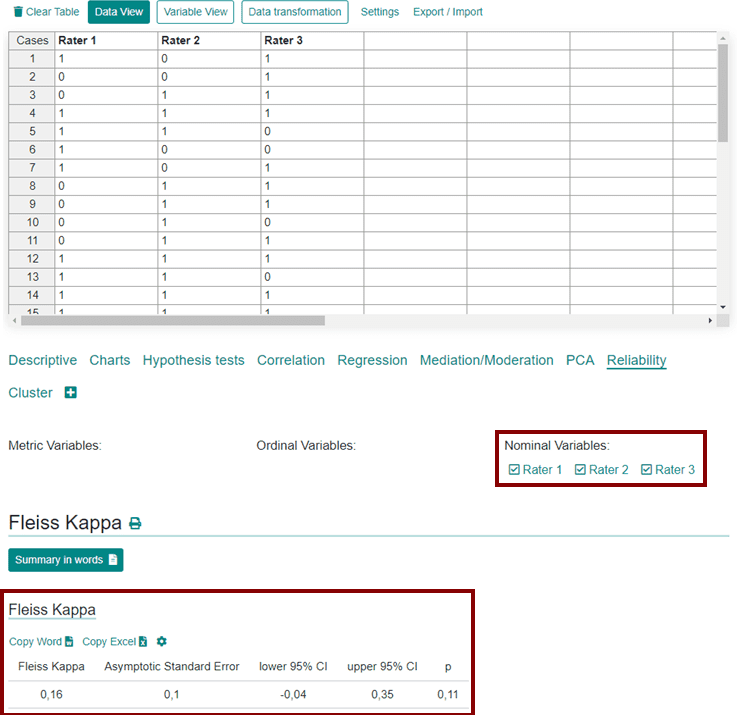

Fleiss' multirater kappa (1971), which is a chance-adjusted index of agreement for multirater categorization of nominal variab

![Fleiss Kappa [Simply Explained] - YouTube Fleiss Kappa [Simply Explained] - YouTube](https://i.ytimg.com/vi/ga-bamq7Qcs/mqdefault.jpg)